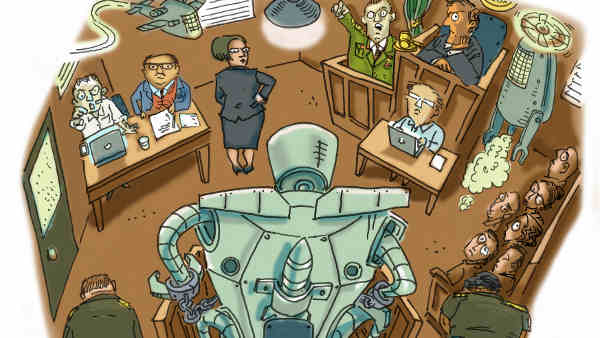

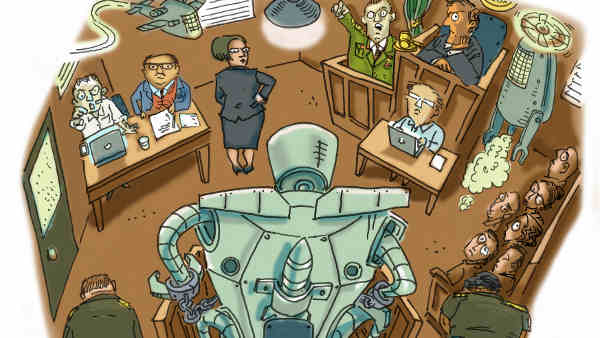

Future of Crime: Attack of the Killer Robots

Although “killer robots” do not exist yet, the rapid movement of technology in that direction has attracted international attention and concern.

Programmers, manufacturers, and military personnel could all escape liability for unlawful deaths and injuries caused by fully autonomous weapons, or “killer robots,” humanitarian organization Human Rights Watch (HRW) said in a report released today.

The report was issued in advance of a multilateral meeting on the weapons at the United Nations in Geneva.

The 38-page report, “Mind the Gap: The Lack of Accountability for Killer Robots,” details significant hurdles to assigning personal accountability for the actions of fully autonomous weapons under both criminal and civil law.

It also elaborates on the consequences of failing to assign legal responsibility. The report is jointly published by Human Rights Watch and Harvard Law School’s International Human Rights Clinic.

“No accountability means no deterrence of future crimes, no retribution for victims, no social condemnation of the responsible party,” said Bonnie Docherty, senior Arms Division researcher at Human Rights Watch and the report’s lead author. “The many obstacles to justice for potential victims show why we urgently need to ban fully autonomous weapons.”

Fully autonomous weapons would go a step beyond existing remote-controlled drones because they would be able to select and engage targets without meaningful human control.

Precursors to these weapons, such as armed drones, are being developed and deployed by nations including China, Israel, South Korea, Russia, the United Kingdom, and the United States.

Human Rights Watch is a co-founder of the Campaign to Stop Killer Robots and serves as its coordinator. The international coalition of more than 50 nongovernmental organizations calls for a preemptive ban on the development, production, and use of fully autonomous weapons.

The report will be distributed at a major international meeting on “lethal autonomous weapons systems” at the UN in Geneva from April 13 to 17, 2015.

A key concern with fully autonomous weapons is that they would be prone to causing civilian casualties in violation of international humanitarian law and international human rights law.

The lack of meaningful human control that characterizes the weapons would make it difficult to hold anyone criminally liable for such unlawful actions, warns Human Rights Watch.